Some time ago I was on an internal infrastructure pentest job where I found a web server that hosted the TimeLive application. I had never heard of this application, and since I was looking at a login page, I opened a browser to my favourite search engine. The following is a brief explanation of things that I shouldn’t have found.

According to the vendor web site (http://www.livetecs.com/): “TimeLive Web timesheet suite is an integrated suite for time record, time tracking and time billing software.”

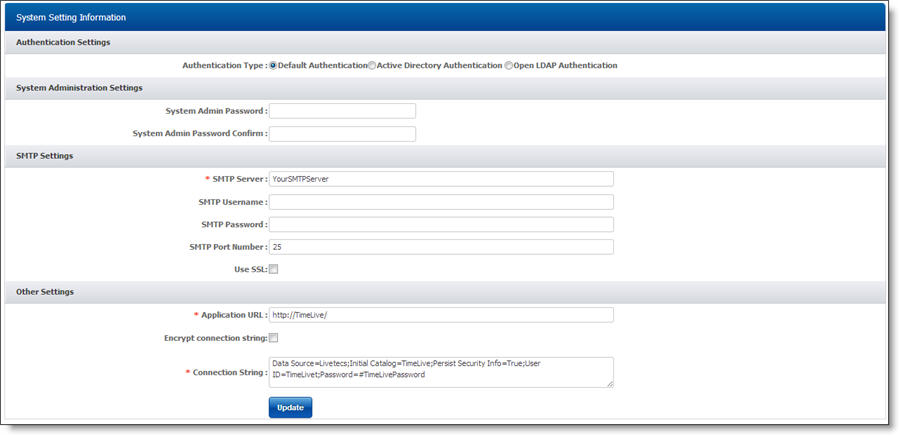

My local default password list did not have an entry for this software, so my next search query was for default credentials. Sadly I didn’t find any, but I did find a reference (and a handy screenshot) that referred to the URI /home/systemsetting.aspx.

A close look at the screenshot showed a database connection string, so I thought I would check the server…

I was able to access the URI without any authentication and was presented with a page that looked very similar to the screenshot. In fact, the only difference I noticed was the database connection string. Time to fire up a database tool…

So those credentials worked – I really shouldn’t be able to access that information! There is a tick-box on the page to encrypt the connection string but the default is clearly to not have that enabled.
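To illustrate why an unencrypted connection string on an unauthenticated page is so serious, here is a minimal Python sketch of the kind of check an auditor might run against such a string. The function names `parse_connection_string` and `audit_connection_string`, and the two conditions it flags, are my own assumptions rather than anything in TimeLive; the parser assumes the common SQL Server `key=value;` format and does not handle quoted values that contain semicolons.

```python
def parse_connection_string(conn: str) -> dict[str, str]:
    """Split a 'key=value;key=value' string into a dict with lower-cased keys."""
    settings = {}
    for part in conn.split(";"):
        if "=" not in part:
            continue  # skip empty segments, e.g. from a trailing ';'
        key, _, value = part.partition("=")  # values may themselves contain '='
        settings[key.strip().lower()] = value.strip()
    return settings


def audit_connection_string(conn: str) -> list[str]:
    """Return (hypothetical) findings for a SQL Server-style connection string."""
    settings = parse_connection_string(conn)
    findings = []
    user = settings.get("user id") or settings.get("uid") or ""
    if user.lower() == "sa":
        findings.append("uses the sa (sysadmin) account")
    if settings.get("password") or settings.get("pwd"):
        findings.append("contains a plaintext password")
    return findings


# Example with made-up values:
print(audit_connection_string("Data Source=.\\SQLEXPRESS;User ID=sa;Password=secret"))
```

A string using integrated Windows authentication (no `User ID` or `Password`) would produce no findings from this sketch.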

A closer look at the page showed me two text input boxes to specify (and confirm) the System Admin password. There was no box to provide the current System Admin password(!).

There were also some fields relating to the SMTP settings.

Without knowing anything about how the client used that service, I decided it was probably not a good idea to play with the settings, so I went to tell my client contact about the database connection string issue. The only way he was able to lock down access to that page was by altering the filesystem Access Control Lists (ACLs) on the file systemsetting.aspx, because the application had no method of its own for restricting access. The contact also stated that the password change functionality would have worked.

After I had finished the pentest job and completed the report, I thought I would check the latest version of TimeLive to see whether the database connection string issue was still present.

I downloaded a trial version and installed it in a Windows virtual machine. This version was several releases newer than the one I had seen, so I wasn’t optimistic. However, the same URI gave me the database connection string, but wait… what’s this?

The TimeLive application bundles its own MS-SQL database environment, and a default install uses the sa account. I connected to the database (reading the connection details from the web page) and confirmed that I could access xp_cmdshell. I (command)-promptly ran whoami and found that yes, I was SYSTEM on the host.

I remembered what my client contact had said about having to modify the underlying Operating System (OS) file ACL permissions to prevent access, so I had a look at the ACLs on several application files. Any local user logged into the host had full permissions on all files belonging to the application, with the exception of the actual database files (which are created by the database engine). One of those files contained the same database connection string that the web page displayed, so configuring the web server to block access to /home/systemsetting.aspx would not prevent local users on the host from elevating their privileges.
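Checking ACLs like these by hand gets tedious across a whole install directory. The following is a minimal Python sketch that scans the text output of the Windows `icacls` command for entries that give broad local groups write-level rights. It assumes the usual English-language `icacls` output (lines such as `BUILTIN\Users:(I)(F)`); the function name and the list of "broad" principals are my own assumptions, and localized systems will use different group names.

```python
import re

# Groups that effectively include every local user (an assumption; extend as needed).
BROAD_PRINCIPALS = ("BUILTIN\\Users", "Everyone", "NT AUTHORITY\\Authenticated Users")
# icacls rights that let a principal change a file: F = full, M = modify, W = write.
WRITE_RIGHTS = {"F", "M", "W"}


def writable_by_local_users(icacls_output: str) -> list[tuple[str, str]]:
    """Return (principal, rights) pairs that grant broad groups write-level access."""
    findings = []
    for principal in BROAD_PRINCIPALS:
        # An entry looks like 'BUILTIN\Users:(I)(F)': principal, colon, bracketed flags.
        pattern = re.escape(principal) + r":((?:\([^)]*\))+)"
        for match in re.finditer(pattern, icacls_output):
            groups = re.findall(r"\(([^)]*)\)", match.group(1))
            # A single bracket may hold a comma-separated list, e.g. '(RX,W)'.
            flags = {flag for group in groups for flag in group.split(",")}
            if flags & WRITE_RIGHTS:
                findings.append((principal, match.group(1)))
    return findings


# Example with a made-up path, in the shape icacls prints:
sample = """C:\\App\\web.config BUILTIN\\Administrators:(I)(F)
                   NT AUTHORITY\\SYSTEM:(I)(F)
                   BUILTIN\\Users:(I)(F)"""
print(writable_by_local_users(sample))
```

The output would be fed in from something like `icacls <install directory> /T`; the sketch only reads the text, so it does not need to run on the target host.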

I had a quick play with the options offered by systemsetting.aspx and was able to make changes without being logged in. These settings included an email address (presumably where system alerts are sent) and the authentication options for the application. It looks like it can hook into an LDAP directory (either Microsoft Active Directory or OpenLDAP), so a bad guy could either specify a non-existent login server (to cause a Denial of Service condition) or, presumably, set up their own server that accepts every login while capturing those usernames and passwords for later use.

As an added bonus, the application defaults to HTTP and not HTTPS, meaning that any data transmitted over the network between clients and the server is not encrypted.

As I was browsing the application files, I noticed the word upload. I thought, “that’s worth a quick look”.

I decided to look for horizontal privilege escalation, so I created two different low-level user accounts to see whether user1 could access files belonging to user2. Not being familiar with the application, I had to explore it for a while before I found where a user could upload files.

After uploading a test file (there was no limitation on file types as the upload feature was for generic project resources) I searched for the file on the file system and checked the ACL settings. Thanks to inherited permissions, uploaded files had “Read and Execute” enabled.

I found that it was possible to upload a .aspx file (as a low-level user) that included Visual Basic code to run calc.exe. When I browsed to the uploaded file, the server happily executed it under the NETWORK SERVICE account (which is what the web server was configured to use for applications). It turns out that you don’t need to be logged into the application to access that page, so if a user uploads a maliciously crafted file (because an attacker tricked them, via malware, or because the user is malicious), an unauthenticated attacker simply needs to browse to that content to trigger the coded behaviour.
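The upload feature placed no restriction on file types. A common first line of defence is an extension allowlist, sketched minimally in Python below. The function name and the set of allowed extensions are hypothetical; they are not TimeLive’s behaviour or a recommended list for it.

```python
from pathlib import PurePath

# Hypothetical allowlist of document types a project-resource upload might accept.
ALLOWED_EXTENSIONS = {".pdf", ".docx", ".xlsx", ".png", ".jpg", ".txt"}


def is_allowed_upload(filename: str) -> bool:
    """Accept a file only if its final extension is on the allowlist (case-insensitive)."""
    # PurePath.suffix is the last extension only: 'notes.pdf.aspx' -> '.aspx'.
    # Names with no extension or a trailing dot ('shell.aspx.') give '' and are rejected.
    suffix = PurePath(filename).suffix.lower()
    return suffix in ALLOWED_EXTENSIONS


for name in ("Report.PDF", "calc.aspx", "notes.pdf.aspx", "shell.aspx."):
    print(name, is_allowed_upload(name))
```

An allowlist is used here rather than a denylist because it fails closed for extensions nobody thought of. On its own it would not fully address the issue described above, since uploaded files were also served from a location where the web server would execute them.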

As a result, a security advisory was sent to the vendor and the following CVEs were assigned:

* CVE-2014-1217

* CVE-2014-2042

The moral of this story is that it is important to examine the properties of files installed by an application, as well as the configuration settings used by default. A quick Internet search for the application name together with terms like default password or configuration is also worth doing, as it might reveal some interesting results.